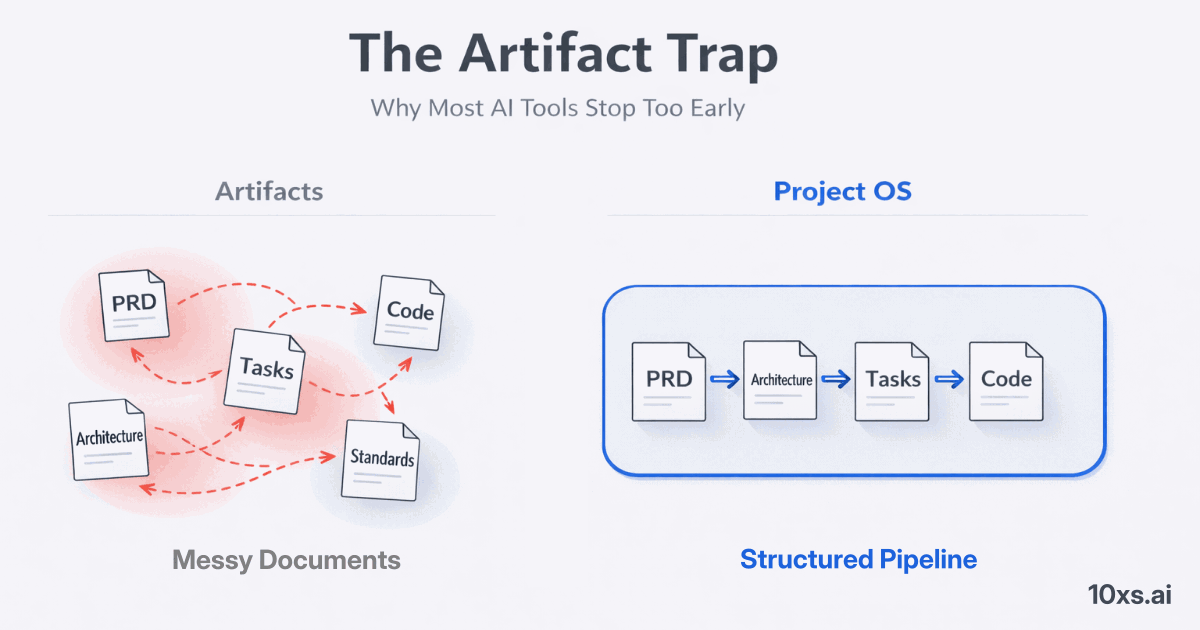

The Artifact Trap: Why Most AI Tools Stop Too Early

Everyone Has a PRD Generator Now

Open any AI coding tool and ask it to write a product requirements document. In thirty seconds, you'll have something that looks professional: user stories, success criteria, technical constraints, a scope section. It's impressive the first time. By the fifth project, you notice something uncomfortable.

The PRD sits in a Google Doc. Or a Notion page. Or a markdown file that nobody references after day one.

The architecture diagram gets drawn in a whiteboard session, exported as a PNG, and never updated. The task breakdown lives in Jira tickets that drift from the original design within a week. The coding standards exist in a wiki page that new team members read once and forget.

This is the artifact trap. AI tools have become extraordinarily good at generating engineering artifacts. But generation was never the bottleneck. Execution is.

The Gap Between Documents and Delivery

Here's what actually happens on most AI-assisted projects:

Week 1: Team generates a PRD with AI. It's thorough. Everyone's excited.

Week 2: An AI coding assistant starts building. It doesn't read the PRD. It writes a 400-line component because nobody told it about the 200-line file limit. It uses Redux because that's what dominated its training data, even though the team chose Zustand.

Week 3: A different developer opens a new session. The AI has no memory of last week's architectural decisions. It suggests a completely different folder structure. The developer, under deadline pressure, goes with it.

Week 4: Three sessions in, the codebase has three different patterns for the same problem. The PRD is already outdated. The architecture doc describes a system that no longer exists.

The artifacts were generated. They were even good. But they had no mechanism to govern ongoing execution. They were write-once, read-never documents.

Generation Is Solved. Governance Is Not.

The industry has spent two years building better generators. Better PRD writers. Better architecture diagrammers. Better code generators. And they work — the quality of AI-generated artifacts is genuinely impressive.

But generation without governance is like having a constitution that nobody enforces. The rules exist on paper. Nobody follows them. Not because they're bad rules, but because there's no mechanism to make them operational.

What's missing isn't another generator. It's a governance layer — something that sits between the artifacts and the execution, ensuring that what was decided actually gets followed. Across sessions. Across tools. Across team members.

What Governance Actually Means

Governance isn't bureaucracy. It's not adding process for the sake of process. In engineering, governance means:

Persistent context. Your AI assistant knows that this project uses Next.js 15 with the App Router, that files stay under 200 lines, and that tests go in tests/ — not because you told it today, but because that decision is encoded in a structure the tool reads automatically.

Role separation. Architects design. Developers implement. Because the governance layer defines what each role does and when, the AI doesn't blur these boundaries.

Quality gates. A task isn't "done" because the AI said so. It's done when tests pass, file limits hold, complexity stays within thresholds, and there's recorded evidence of all three, not just the AI's assertion.

Session continuity. When a developer picks up work tomorrow, the AI doesn't start from scratch. It reads the handoff, understands what was done, and continues from where things left off.

These aren't theoretical ideals. They're operational constraints that prevent the entropy that kills AI-assisted projects after the first sprint.
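To make the persistent-context and quality-gate ideas concrete, here is a minimal sketch in Python. It is an illustration only, not how any particular tool implements this: the names ProjectRules, check_file_limits, and gate_passes are hypothetical, and the only values taken from this article are the framework, the 200-line limit, and the tests/ directory.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProjectRules:
    """Hypothetical encoding of the project decisions used as examples above."""
    framework: str = "Next.js 15 (App Router)"
    max_file_lines: int = 200
    tests_dir: str = "tests/"


def check_file_limits(files: dict[str, str], rules: ProjectRules) -> list[str]:
    """Return one message per file whose line count exceeds the limit.

    `files` maps a path to that file's text content.
    """
    violations = []
    for path, content in sorted(files.items()):
        line_count = len(content.splitlines())
        if line_count > rules.max_file_lines:
            violations.append(
                f"{path}: {line_count} lines (limit {rules.max_file_lines})"
            )
    return violations


def gate_passes(files: dict[str, str], rules: ProjectRules, tests_passed: bool) -> bool:
    """A task counts as done only if tests passed and no file breaks the limit."""
    return tests_passed and not check_file_limits(files, rules)
```

The point of the sketch is that "done" is computed from evidence (a test result and measured line counts) rather than taken from the assistant's own report. A real gate would also cover complexity thresholds, which are left out here.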

The Operating System Metaphor

Think about what an operating system does for a computer. It doesn't write your applications. It provides the environment — file systems, process management, permissions, scheduling — that makes applications work reliably.

Software projects need the same thing. Not another tool that generates more artifacts. An operating system that makes the artifacts operational. One that travels with the codebase, not with a SaaS subscription. One that any AI tool can read, not just the one that generated it.

That's the category we're building: Project OS.

A portable governance layer that encodes your project's rules, structure, and current state in a format that AI coding tools consume automatically. Generate your PRD, architecture, and work breakdown once — then export a governance instance that enforces those decisions across every session, every tool, every team member.

The artifacts stop being documents. They become infrastructure.
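As a rough illustration of what "portable" could mean here, the sketch below stores rules and current state in plain JSON that any tool can read and write back. This is an assumption made for the example, not the actual Project OS format: the GovernanceInstance class and its fields (rules, completed_tasks, next_task) are hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class GovernanceInstance:
    """Hypothetical container for project rules plus session handoff state."""
    rules: dict[str, object] = field(default_factory=dict)
    completed_tasks: list[str] = field(default_factory=list)
    next_task: str = ""

    def to_json(self) -> str:
        # Plain JSON keeps the instance readable by any tool or person.
        return json.dumps(asdict(self), indent=2, sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "GovernanceInstance":
        # A new session rebuilds the same rules and state from the file.
        return cls(**json.loads(text))


# One session records its decisions and progress...
saved = GovernanceInstance(
    rules={"max_file_lines": 200, "state_library": "Zustand"},
    completed_tasks=["scaffold app"],
    next_task="build login form",
).to_json()

# ...and a later session, possibly in a different tool, picks them up.
resumed = GovernanceInstance.from_json(saved)
```

Because the file lives in the repository rather than in one vendor's session history, the handoff survives a change of tool or developer.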

Where This Is Going

We're not the only ones who see this gap. Every team scaling AI-assisted development eventually builds ad-hoc governance: custom CLAUDE.md files, team wikis, and onboarding scripts that try to give AI tools context. The problem is that these solutions are fragile, manual, and tool-specific.

The next wave of AI development tooling won't be about generating more. It will be about governing better. Making AI output predictable, consistent, and aligned with decisions that were already made.

Generation got us started. Governance is what gets us to production.

We built Project OS to close this gap. If you want to see what a portable governance layer looks like in practice, read Introducing Project OS or try it yourself.